An Object-Centered Data Acquisition Method for 3D Gaussian Splatting using Mobile Phone

Abstract

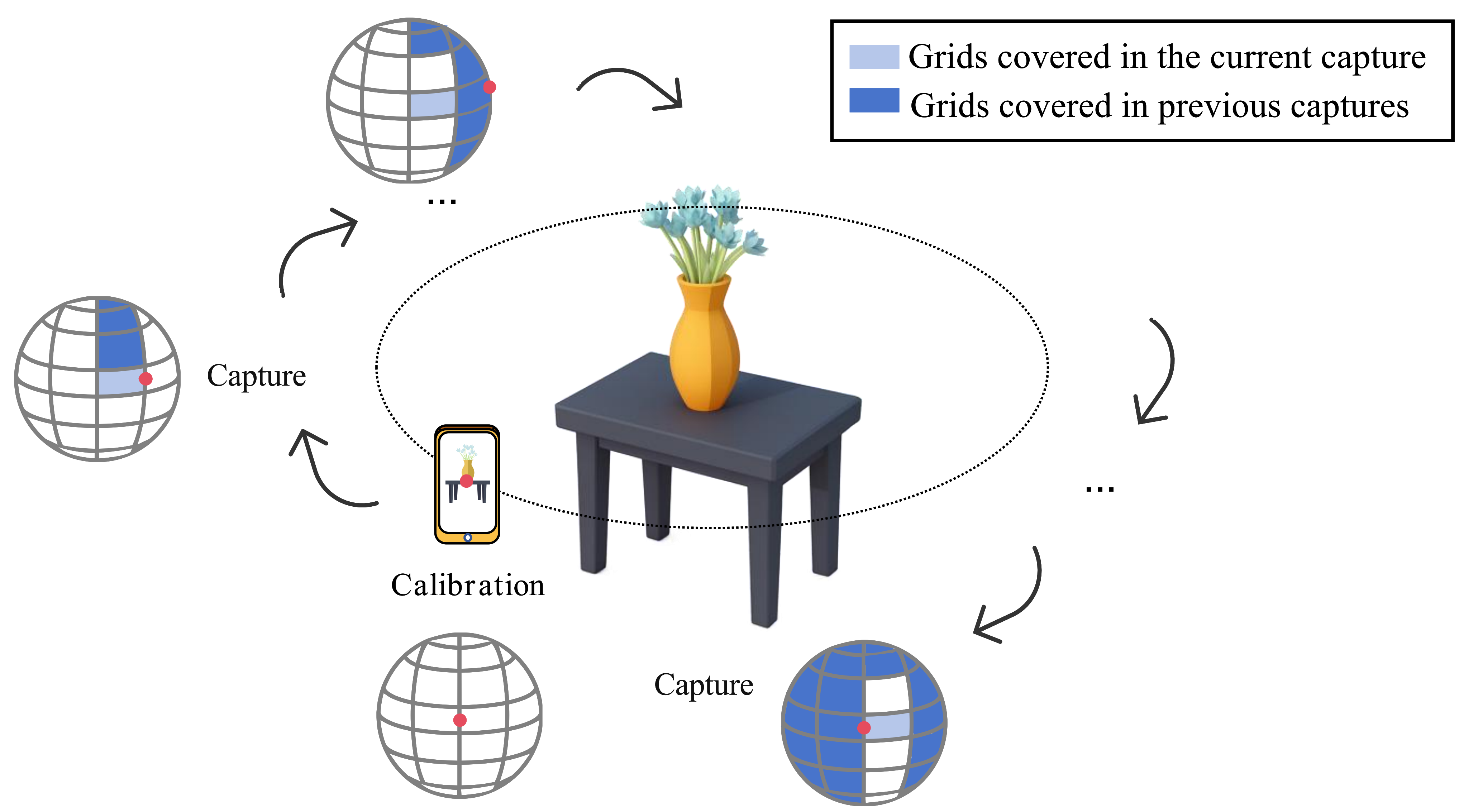

High-quality 3D Gaussian Splatting (3DGS) reconstruction relies heavily on accurate poses and comprehensive viewpoint coverage. We present a mobile, object-centered data acquisition framework that addresses these challenges through on-device guidance and sensor fusion. Our system maps the camera's optical axis to a discretized spherical grid after a one-time calibration. By providing real-time, area-weighted coverage feedback and employing a stability gate based on smoothed IMU signals, we ensure that users capture uniform, blur-free images essential for high-fidelity reconstruction.
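The mapping and coverage metric described above can be illustrated with a minimal sketch. It assumes the optical axis is already a unit vector in the object-centered frame (that is, after the one-time calibration), that the grid is a full-sphere equal-angle grid, and that a cell's weight is its solid angle. The grid resolution and the names `axis_to_cell`, `cell_area`, and `coverage` are hypothetical and not taken from the app.

```python
import math

N_AZ, N_EL = 36, 18  # hypothetical grid resolution: 10-degree cells


def axis_to_cell(axis):
    """Map a unit optical-axis vector (object frame) to an (az, el) cell index."""
    # The camera looks at the object, so it sits opposite to its optical axis.
    vx, vy, vz = (-c for c in axis)
    az = math.atan2(vy, vx)                    # azimuth in [-pi, pi]
    el = math.asin(max(-1.0, min(1.0, vz)))    # elevation in [-pi/2, pi/2]
    i = min(int((az + math.pi) / (2 * math.pi) * N_AZ), N_AZ - 1)
    j = min(int((el + math.pi / 2) / math.pi * N_EL), N_EL - 1)
    return i, j


def cell_area(j):
    """Solid angle of one cell in elevation band j (equal for every azimuth)."""
    lo = -math.pi / 2 + j * math.pi / N_EL
    hi = lo + math.pi / N_EL
    return (2 * math.pi / N_AZ) * (math.sin(hi) - math.sin(lo))


def coverage(visited):
    """Area-weighted fraction of the sphere covered by the visited cells."""
    return sum(cell_area(j) for _, j in set(visited)) / (4 * math.pi)
```

Weighting by solid angle matters because equal-angle cells shrink toward the poles; a plain cell count would overstate how much of the sphere the polar cells cover.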

Key Contributions

- Spherical Mapping: Transformation of IMU-based device orientations into an object-centered spherical coordinate system.

- Coverage Guidance: Real-time visualization of area-weighted spherical coverage to ensure angular uniformity and completeness.

- Stability Control: A dual-mode stability gate (using linear acceleration and angular velocity) to filter out motion blur and unstable poses.
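As a rough illustration of the stability gate, the sketch below smooths the magnitudes of linear acceleration and angular velocity with an exponential moving average and permits capture only while both stay under a threshold. The class name `StabilityGate`, the smoothing factor, and both thresholds are illustrative assumptions; the app's actual filter and values may differ.

```python
import math


class StabilityGate:
    """Allow capture only while smoothed IMU motion stays below thresholds."""

    def __init__(self, alpha=0.2, max_accel=0.15, max_gyro=0.05):
        # alpha: EMA smoothing factor; thresholds in m/s^2 and rad/s.
        # All three values are illustrative assumptions.
        self.alpha = alpha
        self.max_accel = max_accel
        self.max_gyro = max_gyro
        self.accel = None
        self.gyro = None

    def _ema(self, prev, value):
        # The first sample initializes the filter.
        if prev is None:
            return value
        return self.alpha * value + (1 - self.alpha) * prev

    def update(self, lin_accel, ang_vel):
        """Feed one IMU sample (two 3-vectors); return True if stable."""
        self.accel = self._ema(self.accel, math.hypot(*lin_accel))
        self.gyro = self._ema(self.gyro, math.hypot(*ang_vel))
        return self.accel < self.max_accel and self.gyro < self.max_gyro
```

Because the gate tests smoothed values, a single jolt keeps it closed for several samples afterwards, which helps avoid capturing a frame while the device is still settling.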

Experimental Setup

- Mobile Device: Redmi K70 Pro (Data logging & Real-time guidance).

- Workstation: NVIDIA RTX 5090D (Offline 3DGS training & reconstruction).

- Targets: Various tabletop objects with complex geometries and textures.

System Overview & Demonstration

Dataset Objects: The following objects were used to validate our method: miniclawmachine, bearplanter, terracotta warrior replica, and coinbank.

Quantitative Evaluation: Free vs. Guided Capture

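We conducted a user study comparing unguided "Free Capture" against our "Guided Capture" method. Table 1 shows that free capture often yields an uneven distribution (more images of frontal views, with per-sector coverage between 32% and 73%), whereas our guided method reaches 100% coverage in every sector with a comparable number of images (258 versus 283 on average). Guided capture also achieves a higher PSNR than the free-capture average at both 7k steps (26.78 vs. 25.86 dB) and 30k steps (30.28 vs. 27.69 dB). (Raw data available here).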

Table 1: Image count (Img) and coverage (Cov) per angular sector, with PSNR (dB) at 7k and 30k training steps. Sectors: Front (-45° to 45°), Right (45° to 135°), Back (135° to -135°), Left (-135° to -45°).

| Method / ID | Front Img | Front Cov | Right Img | Right Cov | Back Img | Back Cov | Left Img | Left Cov | PSNR 7k (dB) | PSNR 30k (dB) |
|---|---|---|---|---|---|---|---|---|---|---|
| Free (User 1) | 123 | 73% | 25 | 44% | 51 | 40% | 42 | 52% | 25.92 | 28.77 |
| Free (User 2) | 90 | 69% | 50 | 57% | 79 | 52% | 70 | 73% | 25.23 | 26.95 |
| Free (User 3) | 109 | 48% | 63 | 61% | 46 | 57% | 81 | 48% | 26.86 | 28.89 |
| Free (User 4) | 63 | 65% | 71 | 51% | 50 | 32% | 74 | 52% | 25.67 | 26.81 |
| Free (User 5) | 92 | 61% | 88 | 60% | 93 | 52% | 57 | 61% | 25.61 | 27.02 |
| Free (Avg) | 95 | 63% | 59 | 55% | 64 | 47% | 65 | 57% | 25.86 | 27.69 |
| Ours (Guided) | 82 | 100% | 53 | 100% | 63 | 100% | 60 | 100% | 26.78 | 30.28 |
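The four sectors in Table 1 partition the azimuth range, with the Back sector wrapping through ±180°. A small sketch of how a view's azimuth could be assigned to a sector is shown below; the function name `sector` and the half-open boundary convention are assumptions, since the page does not say how boundary angles are assigned.

```python
from collections import Counter


def sector(azimuth_deg):
    """Return the Table 1 sector name for an azimuth given in degrees."""
    # Wrap any angle into [-180, 180) so that 270 is treated like -90.
    a = (azimuth_deg + 180.0) % 360.0 - 180.0
    if -45.0 <= a < 45.0:
        return "Front"
    if 45.0 <= a < 135.0:
        return "Right"
    if -135.0 <= a < -45.0:
        return "Left"
    return "Back"  # covers [135, 180) and [-180, -135)


def images_per_sector(azimuths_deg):
    """Count captured images in each sector."""
    return Counter(sector(a) for a in azimuths_deg)
```

For example, `images_per_sector([0, 10, 90, 180, -170, -90])` counts two Front, one Right, two Back, and one Left image.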

Pose Accuracy Analysis

Note: This section supplements the manuscript with additional experimental data not included in the main text due to space constraints.

To evaluate the utility of our IMU-based orientation estimates, we compared standard 3DGS training (using COLMAP poses) against a hybrid approach in which COLMAP rotations are replaced by our device-calibrated orientations. Table 2 shows that, averaged over three trials per scene, our object-centered orientations yield a small PSNR gain in the early training stage (+0.25% to +0.59% at 7k steps) and essentially unchanged PSNR at 30k steps (+0.01% to +0.10%).
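One way such a rotation swap could be carried out is sketched below. It relies on COLMAP's documented world-to-camera convention (`x_cam = R x_world + t`, so the camera center is `C = -Rᵀ t`). Two things are assumptions, because the page does not describe them: that the replacement rotation `R_new` has already been expressed in COLMAP's world frame, and that the camera center is kept fixed while the translation is recomputed. The helper names are hypothetical.

```python
def transpose(R):
    """Transpose a 3x3 matrix given as a list of rows."""
    return [list(col) for col in zip(*R)]


def matvec(R, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(row[k] * v[k] for k in range(3)) for row in R]


def replace_rotation(R_colmap, t_colmap, R_new):
    """Swap in R_new while keeping the COLMAP camera center fixed.

    COLMAP poses are world-to-camera: x_cam = R @ x_world + t,
    so the camera center in world coordinates is C = -R^T t.
    Assumption: R_new is already expressed in COLMAP's world frame.
    """
    center = [-c for c in matvec(transpose(R_colmap), t_colmap)]
    # New translation that places the same center under the new rotation.
    t_new = [-c for c in matvec(R_new, center)]
    return R_new, t_new, center
```

Recomputing `t` is needed because, in this convention, the translation depends on the rotation; reusing the old `t` with a new `R` would silently move the camera.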

Table 2: PSNR (dB) at 7k and 30k training steps with COLMAP poses versus hybrid poses.

| Scene | Trial | COLMAP (Origin) 7k | COLMAP (Origin) 30k | Ours (Hybrid) 7k | Ours (Hybrid) 30k | Improvement (Avg) |
|---|---|---|---|---|---|---|
| miniclawmachine | 1 | 24.95 | 27.57 | 25.12 | 27.63 | |
| miniclawmachine | 2 | 24.82 | 27.57 | 25.00 | 27.68 | |
| miniclawmachine | 3 | 25.03 | 27.74 | 25.12 | 27.59 | |
| miniclawmachine | avg | 24.93 | 27.63 | 25.08 | 27.64 | 7k: +0.59%, 30k: +0.02% |
| bearplanter | 1 | 26.70 | 28.68 | 26.81 | 28.66 | |
| bearplanter | 2 | 26.73 | 28.71 | 26.74 | 28.64 | |
| bearplanter | 3 | 26.72 | 28.64 | 26.80 | 28.81 | |
| bearplanter | avg | 26.72 | 28.67 | 26.78 | 28.70 | 7k: +0.25%, 30k: +0.10% |
| coinbank_bear | 1 | 26.35 | 28.45 | 26.42 | 28.49 | |
| coinbank_bear | 2 | 26.36 | 28.50 | 26.46 | 28.53 | |
| coinbank_bear | 3 | 26.29 | 28.44 | 26.44 | 28.38 | |
| coinbank_bear | avg | 26.34 | 28.46 | 26.44 | 28.46 | 7k: +0.40%, 30k: +0.01% |
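The Improvement (Avg) column appears to be the relative change of the trial-averaged PSNR. That reading is inferred from the numbers rather than stated on the page; the short check below reproduces the miniclawmachine entries under that assumption. The function name `relative_gain` is illustrative.

```python
from statistics import mean

# miniclawmachine PSNR (dB) per trial, copied from Table 2.
colmap = {"7k": [24.95, 24.82, 25.03], "30k": [27.57, 27.57, 27.74]}
hybrid = {"7k": [25.12, 25.00, 25.12], "30k": [27.63, 27.68, 27.59]}


def relative_gain(base, ours):
    """Percentage change of the trial-averaged PSNR."""
    return 100.0 * (mean(ours) - mean(base)) / mean(base)


for step in ("7k", "30k"):
    print(f"{step}: {relative_gain(colmap[step], hybrid[step]):+.2f}%")
# prints:
# 7k: +0.59%
# 30k: +0.02%
```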

Supplementary Ablation Study: Impact of Coverage

Note: This section provides supplementary experimental data not included in the main manuscript.

To quantitatively validate the relationship between view coverage and reconstruction fidelity, we conducted an ablation study where training views were progressively removed from a complete (100% coverage) dataset. We maintained a fixed set of "Test" images to ensure a consistent evaluation baseline (Final PSNR).
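The page does not specify how views were selected for removal. As a purely hypothetical sketch, one option is to drop all views in randomly chosen grid cells until the area-weighted coverage, measured relative to the complete set, falls to the target level. The grid layout, the random cell order, and the names `band_area` and `reduce_coverage` are all assumptions made for illustration.

```python
import math
import random


def band_area(j, n_az, n_el):
    """Solid angle of one equal-angle grid cell in elevation band j."""
    lo = -math.pi / 2 + j * math.pi / n_el
    hi = lo + math.pi / n_el
    return (2 * math.pi / n_az) * (math.sin(hi) - math.sin(lo))


def reduce_coverage(view_cells, target, n_az=36, n_el=18, seed=0):
    """Drop whole cells until coverage relative to the full set is <= target.

    view_cells maps a view name to its (az_index, el_index) grid cell.
    Returns the names of the views that remain in the training set.
    """
    rng = random.Random(seed)
    cells = sorted(set(view_cells.values()))
    rng.shuffle(cells)  # hypothetical choice: remove cells in random order
    full = sum(band_area(j, n_az, n_el) for _, j in cells)
    kept, area = set(cells), full
    for cell in cells:
        if area / full <= target:
            break
        kept.discard(cell)
        area -= band_area(cell[1], n_az, n_el)
    return [name for name, cell in view_cells.items() if cell in kept]
```

Fixing the seed keeps the subsets reproducible; a different removal order (for example, removing under-sampled regions first) would produce different subsets at the same coverage level.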

Observation: As shown in Tables 3 and 4, reconstruction quality (Final PSNR) declines as the spherical coverage percentage decreases. The decline is monotonic in Table 3; Table 4 follows the same overall trend with two local exceptions (70% coverage scores above 80%, and 40% scores above 50%). This degradation indicates that high-density spherical coverage, specifically in traditionally under-sampled regions, is a critical factor for robust 3DGS reconstruction.

Table 3

| Coverage | Test 7k | Test 30k | Train 7k | Train 30k | Final 7k | Final 30k |
|---|---|---|---|---|---|---|
| 100% | 26.00 | 27.31 | 29.01 | 31.45 | 26.39 | 27.84 |
| 90% | 25.78 | 26.77 | 29.01 | 31.05 | 26.20 | 27.32 |
| 80% | 25.54 | 26.48 | 28.65 | 31.42 | 25.94 | 27.12 |
| 70% | 25.38 | 26.20 | 29.19 | 31.67 | 25.87 | 26.90 |
| 60% | 25.05 | 25.48 | 29.48 | 32.34 | 25.61 | 26.36 |
| 50% | 24.52 | 24.85 | 29.54 | 33.24 | 25.17 | 25.93 |
| 40% | 23.43 | 23.58 | 30.53 | 34.47 | 24.34 | 24.98 |

Table 4

| Coverage | Test 7k | Test 30k | Train 7k | Train 30k | Final 7k | Final 30k |
|---|---|---|---|---|---|---|
| 100% | 24.71 | 26.22 | 25.94 | 29.16 | 24.87 | 26.60 |
| 90% | 24.20 | 25.33 | 25.68 | 28.53 | 24.39 | 25.74 |
| 80% | 23.98 | 24.87 | 26.15 | 28.95 | 24.25 | 25.39 |
| 70% | 24.17 | 25.01 | 26.44 | 29.99 | 24.46 | 25.65 |
| 60% | 23.67 | 24.27 | 26.66 | 30.37 | 24.05 | 25.05 |
| 50% | 20.01 | 19.93 | 30.75 | 34.58 | 21.39 | 21.81 |
| 40% | 21.35 | 21.25 | 28.48 | 32.77 | 22.26 | 22.72 |

* Note: For brevity, intermediate 5% intervals are omitted from the web view but are available in the full dataset download.

Resources

- APK Release (v1.1): Download App & Assets. Includes `camera-6-core-debug.apk` (full-feature build) and experimental builds.

Acknowledgements

This project builds upon 3D Gaussian Splatting and the Fossify Camera open-source project.